by ezs | Feb 20, 2021 | evilzenscientist

Sigh.

Tag taxonomy cleanup.

Another great example of why we need “hands off keyboards” and delivery via automation: avoid errors and enforce validation of metadata.

Azure Resource Graph Explorer: find the scope and scale of the problem. I’ll add the usual gripe about tags being case-sensitive in some places (API, PowerShell) and not in others (Azure Portal!).

```kusto
Resources
// "businesowner" (one s) is the misspelled key; project both spellings side by side
| where tags.businesowner != ''
| project name, subscriptionId, resourceGroup, tags.businessowner, tags.businesowner
```
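To see the scope of the problem beyond one known misspelling, the tag key names can also be clustered offline. The following is a minimal Python sketch, not part of any Azure tooling. It assumes you already have a flat list of tag key strings (for example, collected from the `tags` column of an exported query result), folds case first (the Portal/API mismatch above), then uses the standard library's `difflib.SequenceMatcher` to merge near-identical keys such as `businesowner` and `businessowner`. The function name `group_tag_keys` and the 0.9 similarity threshold are hypothetical choices.

```python
from collections import defaultdict
from difflib import SequenceMatcher
from typing import Iterable, List, Set


def group_tag_keys(tag_keys: Iterable[str], threshold: float = 0.9) -> List[Set[str]]:
    """Group tag keys that differ only by letter case or by a small typo."""
    # Step 1: fold case, so "BusinessOwner" and "businessowner" share one bucket.
    by_folded = defaultdict(set)
    for key in tag_keys:
        by_folded[key.casefold()].add(key)

    # Step 2: merge buckets whose folded names are near-identical
    # (SequenceMatcher.ratio() >= threshold), e.g. "businesowner".
    groups: List[Set[str]] = []
    for folded, spellings in sorted(by_folded.items()):
        for group in groups:
            if any(SequenceMatcher(None, folded, other.casefold()).ratio() >= threshold
                   for other in group):
                group.update(spellings)
                break
        else:
            # No similar group found: start a new one.
            groups.append(set(spellings))
    return groups
```

Any returned set holding more than one spelling is a candidate for a rename to the canonical key in the taxonomy.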

by ezs | Feb 15, 2021 | evilzenscientist

Weekend – half a million dead in the US.

Usual weekly update from The Seattle Times. Positive rates are down, and folk are becoming complacent again. Discussion continues on the return to school.

Friday – another variant in Japan; it’s a race. Dr Fauci says “back to normal by Christmas”.

Thursday – AstraZeneca vaccine reluctance. A rich/poor divide in vaccine distribution.

Wednesday – Washington State daily cases are below 600 for the first time in many months. The daily positive test rate is down to 6.5%, and 10.7% of the population has had a first vaccine shot. Vaccine production is now the bottleneck. It’s a race against variants/mutations.

Tuesday – more mutations; one of these could be the escape variant, with massive impact. There is real concern that antibiotic use during COVID is accelerating the risk of antimicrobial resistance (AMR).

Monday – we’re going to see a lot of “this time last year” posts in the media. Between late January and late February 2020 there was news coverage of the first case in the US, and growing concern in China and across Southeast Asia about the spread. My post from mid-March 2020 describes this time; I was still in the office at that point.

https://www.theguardian.com/world/series/coronavirus-live

https://www.seattletimes.com/tag/coronavirus/

by ezs | Feb 12, 2021 | evilzenscientist

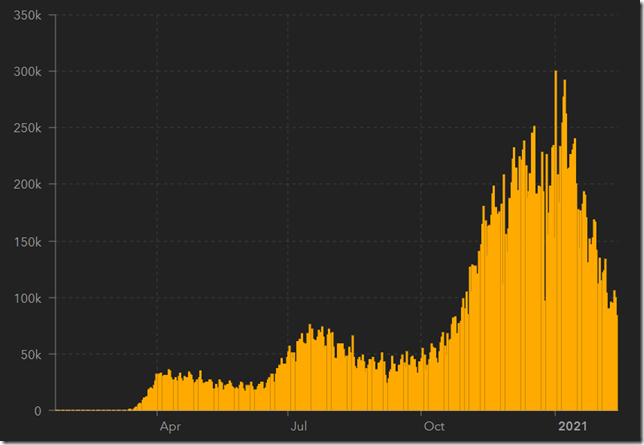

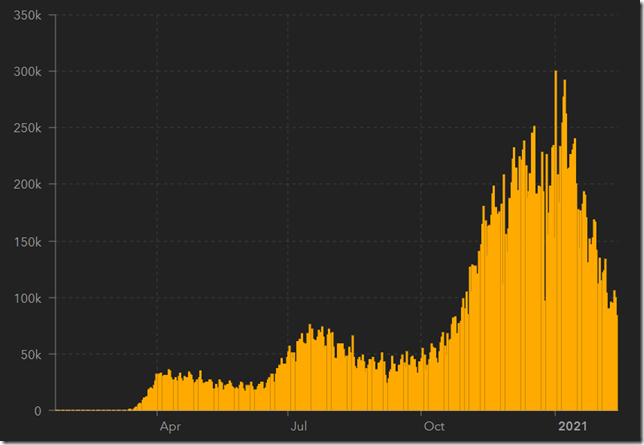

Weekend – cases are declining in Washington State; the positive rate is relatively stable at just under 8%.

US daily reported cases are below 100k/day for the first time since the end of October 2020. Data from Johns Hopkins University (JHU).

Weekend numbers from The Seattle Times.

Friday – the UK hits 21% of its population with at least one vaccine shot.

Thursday – the WHO continues to search for the origin of this coronavirus. 600M vaccine doses for the US by July; the population should be vaccinated by summer.

Wednesday – as vaccines continue to be rolled out, counties and countries are starting to open back up.

Tuesday – a “Wuhan lab leak” is unlikely to be the source of the coronavirus. Border closures continue as governments try to extinguish the spread.

Monday – the UK variant of COVID-19 is rapidly spreading in the US. Vaccines are slowly getting delivered.

https://www.theguardian.com/world/series/coronavirus-live

https://www.seattletimes.com/tag/coronavirus/

by ezs | Feb 10, 2021 | evilzenscientist

Using KQL against Azure Resource Graph, here’s another useful snippet. It’s far faster than PowerShell, returning the result set in under a second.

```kusto
RecoveryServicesResources
// backup jobs for Azure IaaS VMs only; filtering before the join keeps the join small
| where type == "microsoft.recoveryservices/vaults/backupjobs"
| where properties.backupManagementType == "AzureIaasVM"
// add the subscription display name
| join kind=leftouter (ResourceContainers | where type == 'microsoft.resources/subscriptions' | project SubName=name, subscriptionId) on subscriptionId
// containerName is expected to look like "iaasvmcontainerv2;<resource group>;<vm>": keep the resource group
| extend rsg = replace(";[[:graph:]]*", "", replace("iaasvmcontainerv2;", "", tostring(properties.containerName)))
| extend vmname = tolower(tostring(properties.entityFriendlyName))
// vault name is the id segment that follows /vaults/
| extend rsvault = tolower(replace("/[[:graph:]]*", "", replace("[[:graph:]]*\\/vaults\\/", "", id)))
// days since the job finished
| extend backupage = datetime_diff('day', now(), todatetime(properties.endTime))
| project SubName, rsg, vmname, rsvault, properties.operationCategory, properties.status, backupage
```
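The regex plumbing for `rsg`, `rsvault` and `backupage` is the fiddliest part of that query. To sanity-check it offline against a few exported rows, here is a minimal Python sketch of the same string handling for a single job. It assumes `containerName` follows the `iaasvmcontainerv2;<resource group>;<vm name>` shape the query relies on and that `endTime` begins with an ISO 8601 date in UTC; the function name `parse_backup_job` is hypothetical.

```python
from datetime import date, datetime, timezone
from typing import Optional, Tuple


def parse_backup_job(job_id: str, container_name: str, end_time_iso: str,
                     now: Optional[datetime] = None) -> Tuple[str, str, int]:
    """Mirror the rsg / rsvault / backupage columns of the KQL query for one job."""
    now = now or datetime.now(timezone.utc)

    # rsg: drop the "iaasvmcontainerv2;" prefix, then everything from the next ";".
    rest = container_name.replace("iaasvmcontainerv2;", "")
    rsg = rest.split(";", 1)[0]

    # rsvault: the path segment right after the last "/vaults/", lower-cased.
    after_vaults = job_id.rsplit("/vaults/", 1)[-1]
    rsvault = after_vaults.split("/", 1)[0].lower()

    # backupage: calendar days between the job's end date and today, both in UTC.
    # Only the date part is parsed, so 7-digit fractional seconds are not a problem.
    end_date = date.fromisoformat(end_time_iso[:10])
    backupage = (now.astimezone(timezone.utc).date() - end_date).days
    return rsg, rsvault, backupage
```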

by ezs | Feb 7, 2021 | evilzenscientist

Back to “Azure Grim Reaper”: flagging and deleting unused storage in Azure.

There is no “nice” way to do this, but Azure Monitor metrics help a lot.

Here’s some code that enumerates a list of subscriptions and, for every storage account, collects transactions over a period of time (60 days here), counts of storage containers, tables, queues, blobs and unmanaged .vhd disks, and the tags.

It’s generic enough for use in most places.

```powershell
# setup (uncomment as needed)
#Import-Module Az
#Import-Module Az.Storage

# creds
#Connect-AzAccount

# set up array of subscriptions
$subs = '<subscription ID>', '<subscription ID>'

# today
$nowdate = Get-Date

# initialise output
$stgdata = @()

Write-Host "Enumerating" $subs.Count "subscription(s)"

# loop through the subscription(s)
foreach ($subscription in $subs) {

    # set context, then read the storage accounts in this subscription
    Write-Host "Switching to subscription" $subscription
    Set-AzContext -Subscription $subscription | Out-Null

    Write-Host "Enumerating storage accounts"
    $stgacclist = Get-AzStorageAccount
    Write-Host "Total of" $stgacclist.Count "storage accounts in subscription" $subscription

    # loop through each storage account
    foreach ($stgacc in $stgacclist) {

        # $stgacc has StorageAccountName, ResourceGroupName, Tags and a Context;
        # pass the Context to the data-plane cmdlets instead of changing global state
        $ctx = $stgacc.Context
        Write-Host "Enumerating storage account" $stgacc.StorageAccountName "in resource group" $stgacc.ResourceGroupName

        # get all storage containers, tables and queues
        Write-Host "Storage containers"
        $stgcontainers = @(Get-AzStorageContainer -Context $ctx)
        Write-Host "Table service"
        $tblservice = @(Get-AzStorageTable -Context $ctx)
        Write-Host "Queue service"
        $qservice = @(Get-AzStorageQueue -Context $ctx)

        # get transactions: 60 days at a one-hour time grain, summed once and reused
        $transactions = Get-AzMetric -ResourceId $stgacc.Id -TimeGrain '01:00:00' -StartTime ($nowdate.AddDays(-60)) -EndTime $nowdate -MetricNames "Transactions" -WarningAction SilentlyContinue
        $txtotal = ($transactions.Data | Measure-Object -Property Total -Sum).Sum
        Write-Host "60 days of transactions" $txtotal

        # reset per-account values so nothing carries over from the previous account
        $lastused = @()
        $blobcount = 0
        $vhdcount = 0

        # loop through each blob service container
        foreach ($container in $stgcontainers) {
            Write-Host "Collecting blob storage data"
            $lastused += [PSCustomObject]@{
                Subscription     = $subscription
                ResourceGroup    = $stgacc.ResourceGroupName
                StorageAccount   = $stgacc.StorageAccountName
                StorageContainer = $container.Name
                LastModified     = $container.LastModified.Date
                Age              = ($nowdate - $container.LastModified.Date).Days
            }

            # count blobs and unmanaged disks (.vhd page blobs), accumulated across ALL containers
            $allblobs = @(Get-AzStorageBlob -Container $container.Name -Context $ctx)
            $blobcount += $allblobs.Count
            $vhdcount += @($allblobs | Where-Object { $_.BlobType -eq 'PageBlob' -and $_.Name.EndsWith('.vhd') }).Count
        }

        $agemin = ($lastused.Age | Measure-Object -Minimum).Minimum
        $agemax = ($lastused.Age | Measure-Object -Maximum).Maximum
        Write-Host "Total of" $stgcontainers.Count "containers. Minimum age" $agemin "maximum age" $agemax

        # append to the array
        $stgdata += [PSCustomObject]@{
            Subscription   = $subscription
            ResourceGroup  = $stgacc.ResourceGroupName
            StorageAccount = $stgacc.StorageAccountName
            Transactions   = $txtotal
            ContainerCount = $stgcontainers.Count
            TableCount     = $tblservice.Count
            QueueCount     = $qservice.Count
            AgingMin       = $agemin
            AgingMax       = $agemax
            vhd            = $vhdcount
            blobs          = $blobcount
            # tag metadata
            itowner        = $stgacc.Tags.itowner
            businessowner  = $stgacc.Tags.businessowner
            application    = $stgacc.Tags.application
            costcenter     = $stgacc.Tags.costcenter
        }
    }
}

# output to csv
$stgdata | Export-Csv "<somelocation>\storageacc.csv" -Force -NoTypeInformation
```
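Once the CSV exists, a first-pass triage can run over it. Below is a minimal Python sketch, assuming the column names the script writes (`StorageAccount`, `Transactions`, `AgingMin`) and that `-NoTypeInformation` leaves the header as the first line. The function name `flag_unused`, the rule (zero transactions and no container modified within the window) and the 180-day default are all hypothetical; it only produces candidates for review, since tables and queues are not covered by `AgingMin`.

```python
import csv
from typing import Iterable, List


def flag_unused(csv_lines: Iterable[str], min_age_days: int = 180) -> List[str]:
    """Return storage accounts that look unused in the script's CSV output.

    csv_lines:    an open file or any iterable of CSV lines with a header row.
    min_age_days: hypothetical threshold; the youngest container must be at
                  least this many days old for the account to count as stale.
    """
    flagged = []
    for row in csv.DictReader(csv_lines):
        # Export-Csv writes $null as an empty string, so treat "" as zero/missing.
        transactions = float(row.get("Transactions") or 0)
        aging_min = row.get("AgingMin") or ""
        # An account with no containers at all (empty AgingMin) also counts as stale.
        stale = aging_min == "" or float(aging_min) >= min_age_days
        if transactions == 0 and stale:
            flagged.append(row["StorageAccount"])
    return flagged
```

Usage: `with open("storageacc.csv", newline="") as f: print(flag_unused(f))`, pointing at wherever `<somelocation>` is.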
